- Blog

- Rose tattoo discography rar

- Shooting games for mac free download

- Emulator windows na mac os x

- Mac gameboy emulator games

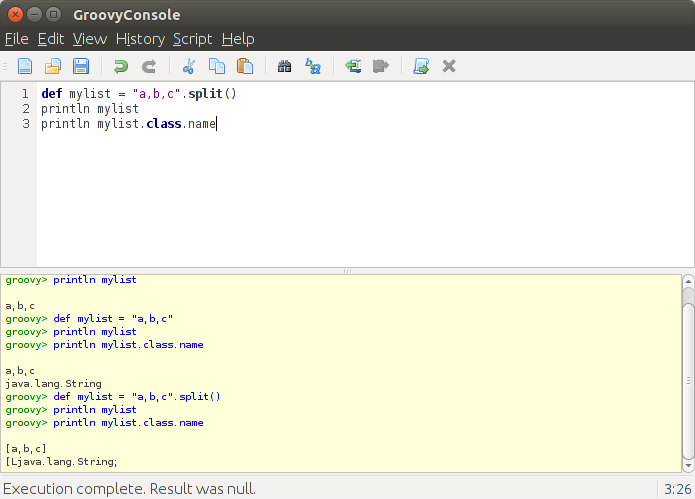

- Bash script example for running java classes mac

- Tenali raman stories in malayalam pdf

- Verbotenes verlangen movie

- Pfsense 2-0-3 iso

- Windows 7 pro oa lenovo singapore iso download

- Lotr bfme 2 pc download

- Bully scholarship edition save game chapter 4 download

- Best emulator mac pc games

- Csn yoi lofelink off halars dmg

- Sonic adventure 2 emulator for mac

- Download gintama episode lengkap sub indo movie

- Ghostbusters the videogame pc serial number

- Rebuild the outlook for mac 2016 database to resolve problems

- Buku keperawatan jiwa pdf download

- #BASH SCRIPT EXAMPLE FOR RUNNING JAVA CLASSES MAC INSTALL#

- #BASH SCRIPT EXAMPLE FOR RUNNING JAVA CLASSES MAC ZIP FILE#

- #BASH SCRIPT EXAMPLE FOR RUNNING JAVA CLASSES MAC FULL#

- #BASH SCRIPT EXAMPLE FOR RUNNING JAVA CLASSES MAC SOFTWARE#

When the fetch & run script runs as an AWS Batch job, it is passed the contents of the job's command parameter.
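As a minimal sketch of what "passed the contents of the command parameter" looks like from inside the container, assume the image starts a shell script as its entrypoint, so each element of the job's command arrives as a positional argument. The function name `show_args` is hypothetical and only illustrates the mechanism.

```shell
#!/bin/bash
# Hypothetical helper: report the arguments the entrypoint was started with.
# Assumption: the job's command array reaches the script as "$@".
show_args() {
  echo "argc=$#"
  local i=1
  for arg in "$@"; do
    echo "arg$i=$arg"
    i=$((i + 1))
  done
}
```

Calling `show_args "$@"` at the top of an entrypoint script would log exactly what the job was started with, which can help when a job definition's command does not behave as expected.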

To get started, download the source code from the aws-batch-helpers GitHub repository. The following link pulls the latest version.

The fetch & run Docker image is based on Amazon Linux. It includes a simple script that reads some environment variables and then uses the AWS CLI to download the job script (or zip file) to be executed. For more information, see Installing the AWS Command Line Interface.
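The behaviour described above (read environment variables, download a script or zip file with the AWS CLI, then execute it) could be sketched roughly as follows. This is not the script from the repository. The variable names `JOB_FILE_TYPE` and `JOB_FILE_S3_URL` and the function names are assumptions made for illustration, and the sketch expects `aws` and `unzip` to be available in the image.

```shell
#!/bin/bash
# Sketch of a fetch-and-run style entrypoint.
# JOB_FILE_TYPE and JOB_FILE_S3_URL are hypothetical variable names.

# Print an error to stderr and return non-zero.
fail() {
  echo "error: $*" >&2
  return 1
}

# Validate the declared file type and echo it back.
job_kind() {
  case "${1:-}" in
    script|zip) echo "$1" ;;
    *) fail "JOB_FILE_TYPE must be 'script' or 'zip'" ;;
  esac
}

# Download the job file from S3 into a temp dir and execute it,
# forwarding any remaining arguments to the job.
fetch_and_run() {
  local kind url workdir entry
  kind="$(job_kind "${JOB_FILE_TYPE:-}")" || return 1
  url="${JOB_FILE_S3_URL:-}"
  [ -n "$url" ] || { fail "JOB_FILE_S3_URL is not set"; return 1; }
  workdir="$(mktemp -d)" || return 1

  if [ "$kind" = "script" ]; then
    aws s3 cp "$url" "$workdir/job.sh" || return 1
    chmod u+x "$workdir/job.sh"
    "$workdir/job.sh" "$@"
  else
    aws s3 cp "$url" "$workdir/job.zip" || return 1
    (cd "$workdir" && unzip -q job.zip) || return 1
    # For a zip, the first argument names the script inside the archive.
    [ "$#" -ge 1 ] || { fail "no script name given for zip job"; return 1; }
    entry="$1"
    shift
    chmod u+x "$workdir/$entry"
    "$workdir/$entry" "$@"
  fi
}
```

A real entrypoint would end with `fetch_and_run "$@"`. Keeping the validation in small functions makes misconfigured jobs fail early with a readable message instead of a failed download.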


If this is the first time you have used AWS Batch, you should follow the Getting Started Guide and ensure you have a valid job queue and compute environment.

After you are up and running with AWS Batch, the next thing is to have an environment to build and register the Docker image to be used. For this post, register this image in an ECR repository. This is a private repository by default and can easily be used by AWS Batch jobs.

You also need a working Docker environment to complete the walkthrough. Alternatively, you could easily launch an EC2 instance running Amazon Linux and install Docker.
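Building the image and registering it in ECR might look like the sketch below. The account ID, region, and repository name are placeholders, the function names are hypothetical, and the login step assumes an AWS CLI version that provides `aws ecr get-login-password`.

```shell
#!/bin/bash
# Sketch: build a Docker image and push it to Amazon ECR.
# Account ID, region, and repository name are placeholders.

# Registry hostname for an account and region.
ecr_registry() {
  echo "$1.dkr.ecr.$2.amazonaws.com"
}

# Full image URI: registry/repository:tag.
ecr_image_uri() {
  echo "$(ecr_registry "$1" "$2")/$3:$4"
}

build_and_push() {
  local account="$1" region="$2" repo="$3" tag="${4:-latest}"
  local registry uri
  registry="$(ecr_registry "$account" "$region")"
  uri="$(ecr_image_uri "$account" "$region" "$repo" "$tag")"

  # Create the repository; continue if it already exists
  # (in this sketch, other errors from this call are ignored too).
  aws ecr create-repository --repository-name "$repo" --region "$region" || true

  # Authenticate the local Docker client against the registry.
  aws ecr get-login-password --region "$region" |
    docker login --username AWS --password-stdin "$registry" || return 1

  # Build from the Dockerfile in the current directory, then tag and push.
  docker build -t "$repo:$tag" . || return 1
  docker tag "$repo:$tag" "$uri" || return 1
  docker push "$uri"
}
```

Run from the directory containing the Dockerfile, a call such as `build_and_push 123456789012 us-east-1 fetch_and_run` would leave the image at the URI printed by `ecr_image_uri`, which is the value a job definition refers to.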


AWS Batch plans, schedules, and executes your batch computing workloads across the full range of AWS compute services and features, such as Amazon EC2 Spot Instances.


When a job runs, AWS Batch launches an instance of your container image to retrieve your script and run your job.

AWS Batch overview

AWS Batch enables developers, scientists, and engineers to easily and efficiently run hundreds of thousands of batch computing jobs on AWS. AWS Batch dynamically provisions the optimal quantity and type of compute resources (e.g., CPU or memory optimized instances) based on the volume and specific resource requirements of the batch jobs submitted. With AWS Batch, there is no need to install and manage batch computing software or server clusters that you use to run your jobs, allowing you to focus on analyzing results and solving problems.


Dougal Ballantyne, Principal Product Manager – AWS Batch

Updated to reflect changes in the IAM create role process.

Docker enables you to create highly customized images that are used to execute your jobs. These images allow you to easily share complex applications between teams and even organizations. However, sometimes you might just need to run a script!

This post details the steps to create and run a simple “fetch & run” job in AWS Batch. AWS Batch executes jobs as Docker containers using Amazon ECS. You build a simple Docker image containing a helper application that can download your script or even a zip file from Amazon S3.